The AI revolution is here, and it is quickly becoming clear that businesses that adopt artificial intelligence have much to gain.

Spacetime is a young artificial intelligence company with big dreams of helping Kiwi enterprises and government benefit from this rapidly advancing technology.

Founded by AUT alumnus Alex Bartley Catt in 2017, Spacetime is already working on several government projects and counts the likes of Amazon Web Services, Google Cloud and IBM Watson as partners. Insight talked to Bartley Catt about Spacetime, some of the exciting things we can do with AI and concerns surrounding this breakthrough technology.

AI is quite a broad term - what does it mean in the context of your company?

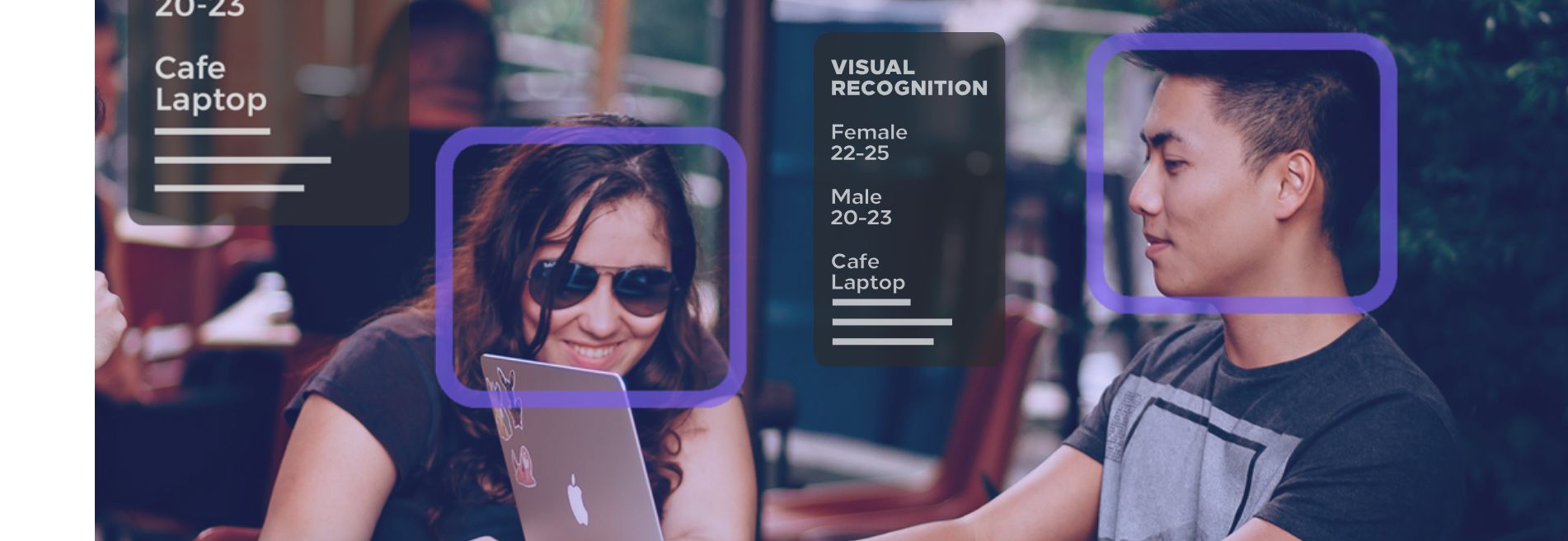

Most people define AI today as creating machines that simulate intelligent or human-like behaviour; in other words, machines that can work, think and react like humans. When we talk about AI at Spacetime, we’re referring to a specific set of software provided by the likes of IBM, Google, Microsoft and Amazon, which can be trained to do a number of things that people can do. For example, AI systems can be trained to recognise objects or people in images, or to extract sentiment or tone from written text such as comments on social media or internal emails. A lot of this technology is known as natural-language understanding, which means computers inferring or ‘understanding’ what a person actually means, and not just the words they say or write. The real power of these technologies lies in being able to train them to fit the specific needs and context of an organisation.

How can Spacetime help the government and New Zealand enterprises use AI to improve customer experience?

We talk about customer experience as it’s a powerful way to interrogate the interaction between organisations and their users. More broadly, because some organisations don’t have ‘customers’, we talk about experience design. All of these terms can get confusing, but the focus is solving problems for people. So we start with methods and tools from design thinking to help organisations understand where the opportunity for AI technology lies.

This includes journey mapping, interviewing and roleplaying. Once we have an understanding of the problem, we present organisations with a roadmap outlining a plan to take advantage of AI. While this roadmap is always different and specific to the organisation and its users, we’re finding that some solutions are providing great value. We place these solutions into three categories - insight, interaction, and automation.

An example of improved insight would be natural-language understanding. Some organisations have huge volumes of unstructured data – often in the form of written text like social media comments – which we can interpret and gain insight from. To use AUT as an example, we could crawl social media for all comments related to the university and build a real-time dashboard to help understand the overall sentiment towards AUT. The insight gained here could help AUT manage public relations and understand the effectiveness of their advertising or marketing.
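As a rough illustration of the sentiment dashboard idea described above, the following Python sketch scores a handful of comments and reports the share that are positive, neutral and negative. It is only a minimal sketch under stated assumptions: the word lists, the sample comments and the function names (`score_comment`, `label`, `summarise`) are all hypothetical, and a production system would more likely rely on a trained natural-language understanding service than on a hand-written word list.

```python
import re
from collections import Counter

# Hypothetical toy lexicon, for illustration only. A real system would
# use a trained natural-language understanding model instead.
POSITIVE = {"great", "love", "helpful", "excellent", "good"}
NEGATIVE = {"bad", "poor", "terrible", "slow", "hate"}

# Hypothetical sample comments standing in for crawled social media text.
SAMPLE_COMMENTS = [
    "Love the new campus, great lecturers",
    "Enrolment was slow and the website is terrible",
    "Open day is on Saturday",
    "Good course but poor parking",
]


def score_comment(text: str) -> int:
    """Return positive-word count minus negative-word count."""
    words = re.findall(r"[a-z']+", text.lower())
    positives = sum(word in POSITIVE for word in words)
    negatives = sum(word in NEGATIVE for word in words)
    return positives - negatives


def label(score: int) -> str:
    """Map a numeric score to a sentiment label."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def summarise(comments: list[str]) -> dict[str, float]:
    """Return the share of comments carrying each sentiment label."""
    labels = ("positive", "neutral", "negative")
    if not comments:
        return {name: 0.0 for name in labels}
    counts = Counter(label(score_comment(c)) for c in comments)
    return {name: counts[name] / len(comments) for name in labels}


if __name__ == "__main__":
    for name, share in summarise(SAMPLE_COMMENTS).items():
        print(f"{name}: {share:.0%}")
```

A dashboard would recompute a summary like this as new comments arrive. Note that the mixed comment ("Good course but poor parking") nets out as neutral here, which is exactly the kind of nuance a trained model is meant to handle better than a word count.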

What inspired you to start a company in the AI space?

I co-founded a digital marketing agency called Tips in 2016 to help small to medium-sized Kiwi businesses take better advantage of digital technologies. The problems we were trying to solve for our clients became more and more specific, so I started to research how newer technologies could help. I came across IBM and Google’s AI offerings and was amazed by their potential. Based on the amount of money being spent on AI at the time, it was clear these large tech companies were taking this seriously. I could see my digital marketing clients benefitting from these AI technologies, and some of them already are. But the real promise of the technology is actually solving specific problems at scale, so to find that scale, I started Spacetime and targeted New Zealand enterprise and government.

How did a degree in business (majoring in advertising and design thinking) at AUT give you an edge in what you are doing?

Design thinking, and in fact advertising, always comes back to people, and that’s really important to me. It’s about understanding the problems that exist for people and coming up with a solution that works for them. For me, design thinking has helped me to build a company that is centred around people. Two key values in design thinking are empathy and collaboration. Empathy is all about understanding one another and ourselves. Collaboration is about bringing people together with different skills and perspectives. I think both of these values help us create amazing things with the people we work with.

What are your future plans for Spacetime?

What came out of early business planning and research was the insight that while AI tech itself is not new, the application of the software to business and people’s problems is. For this reason, the strategy is to start by consulting with as many Kiwi organisations as possible, looking to understand exactly which problems we are facing, and how the technology can be applied. When we start to see common, reproducible applications of the technology, we will consider productisation and scaling distinct solutions. We are only a year into this journey and can already see some use cases providing more value than others.

There are many concerns surrounding AI, especially around privacy. What is your take on this?

While conversations on AI can often lead down the line of killer robots and technological singularity, those are not the issues staring us right in the face today - privacy is. It can be addressed effectively through considered, ethical design, but larger companies aren’t always interested in putting in the effort. I’d say stay informed and be ready to speak up. It’s my intention to use this technology ethically, but we can’t expect that of every company, government, and lone developer. The reality is, the technology itself is not dangerous. The way we decide to use it could be, and it’s up to society as a whole to keep tabs.

Website: spacetime.co.nz